ActiVis

Active Vision with Human in the Loop for People with Vision Impairment

About the Project

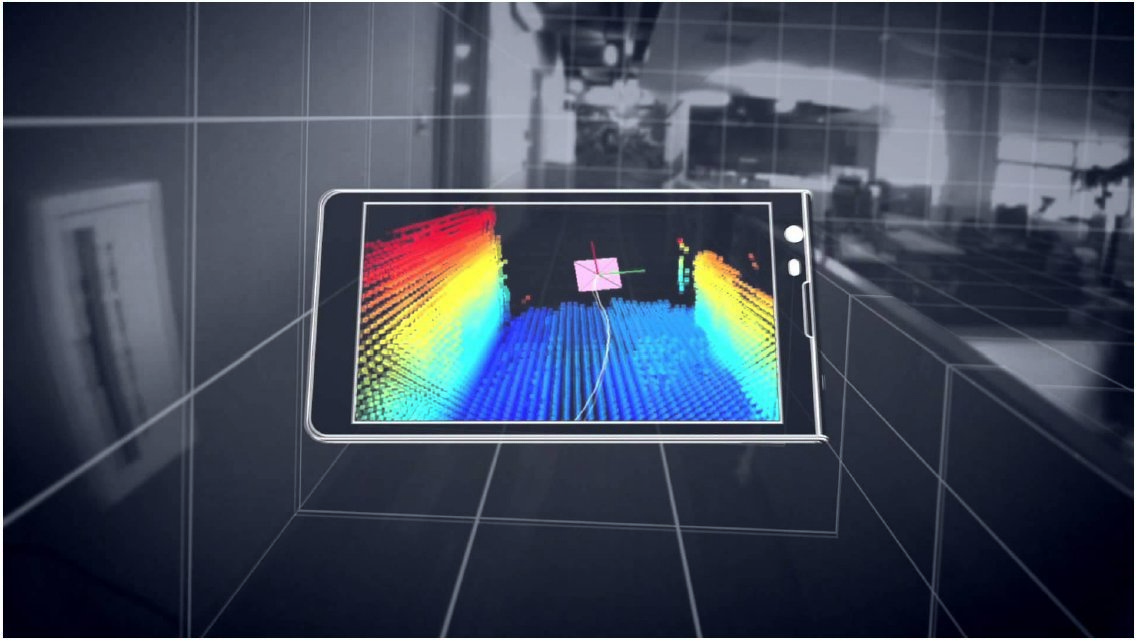

The research proposed in this project is driven by the need for independent mobility among people with vision impairment. It addresses the fundamental problem of active vision with a human in the loop, which allows for an improved navigation and object localisation experience with a handheld camera. This is particularly challenging due to the unpredictability of human motion and sensor uncertainty. While visual-inertial systems can be used to estimate the position of a handheld camera, the camera must often also be pointed towards observable objects and features to facilitate particular navigation tasks, e.g. to enable place categorisation. An attention mechanism for purposeful perception, which drives the user's actions to focus on surrounding points of interest, is therefore needed. This project proposes a novel active vision system with a human in the loop that anticipates, guides and adapts to the actions of a moving user, implemented and validated on a mobile device to aid the indoor navigation of people with vision impairment.